Communication to Unreal

Common Communication Protocols:

Spout

Latency free texture sharing, local connection.

Spout has been a pioneer in texture sharing between applications since I began working in new media art. It is supported by the community, and up until Unreal 5, plugins were provided by many sources on GitHub. Today you can find it packaged in a popular plugin, Offworldlive, which you can find more information about below.

NDI

Texture sharing, network connections.

NDI, Network Device Interface, was created by NewTek and grew out of live broadcasting for TV and film. It's mainly used across a defined local network. If you route correctly within the network, you can have many sources sending video to many receivers.

A word of caution: NDI has a tendency to drop connections when the network is not stable. Please make sure you are using static IPs that do not change. This is the only way to ensure a reliable connection.

- https://ndi.video/

- https://ndi.video/tools/download/

- https://docs.derivative.ca/NDI

- https://derivative.ca/UserGuide/NDI

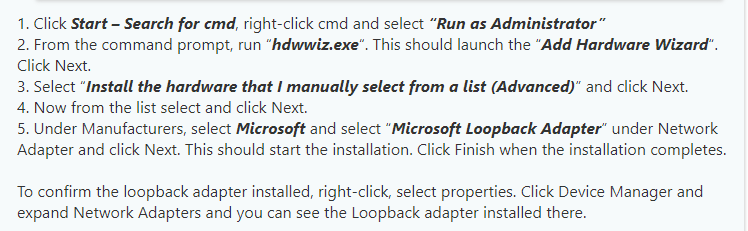

It is possible to create a loopback adapter to get a local connection on the same machine, allowing you to send an NDI signal directly from Touch to Unreal and vice versa. Check out the instructions below to enable the built-in Microsoft workflow.

OSC

Broadcasting common data types: ints, floats, strings.

OSC, Open Sound Control, is a protocol originally used for networking sound synthesizers. It has expanded to a wide range of applications and is delivered over Ethernet and the internet, typically using UDP/IP. This connection does not require a handshake: the receiver simply listens on a port number for messages sent to specific addresses. Because UDP does not guarantee delivery or ordering, messages can be lost, so OSC is best used when strict synchronization is not required.
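To make the "no handshake" idea concrete, here is a minimal sketch of what an OSC message looks like on the wire, written with only the Python standard library. It follows the OSC 1.0 layout (address, type tag string, then arguments, each padded to a multiple of 4 bytes). The function names `encode_osc` and `send_osc`, the address `/fader` and the port `8000` are assumptions made up for this illustration; this is not the API of Touch or of Unreal's OSC plugin.

```python
import socket
import struct


def _pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC strings require."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_osc(address: str, *args) -> bytes:
    """Encode one OSC message carrying int, float or str arguments."""
    tags = ","          # the type tag string always starts with a comma
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            # bool is a subclass of int in Python, so reject it explicitly
            raise TypeError("bool is not handled in this sketch")
        if isinstance(arg, int):
            tags += "i"
            payload += struct.pack(">i", arg)   # 32-bit big-endian integer
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)   # 32-bit big-endian float
        elif isinstance(arg, str):
            tags += "s"
            payload += _pad(arg.encode("ascii"))
        else:
            raise TypeError(f"unsupported argument type: {type(arg).__name__}")
    return _pad(address.encode("ascii")) + _pad(tags.encode("ascii")) + payload


def send_osc(host: str, port: int, address: str, *args) -> bytes:
    """Fire one UDP datagram at host:port. No handshake, no delivery check."""
    packet = encode_osc(address, *args)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
    return packet
```

Calling `send_osc("127.0.0.1", 8000, "/fader", 0.5)` would send a single float to whatever is listening on port 8000 of the same machine. Nothing comes back, so the sender never learns whether the message arrived, which is exactly why dropped messages go unnoticed.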

In today's course, we will focus mainly on this protocol.

DMX

Event industry standard for communication with intelligent devices.

Take a look at Wieland's course in the summer bundle to learn more about this topic!

If you're interested in going down this route, the main thing you need to understand is the steps needed to parse the data. This requires knowing how to build patch sheets and understanding the workflow of the DMX plugin in Unreal. It is simple enough to send DMX data from Touch, and once the process is understood, thinking outside the box can produce interesting results. But in comparison to the other protocols, I think it's better to leave this for real-world intelligent fixtures. When used for stage lighting previz, it is the easiest way to sync your shows into Unreal.
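As a rough illustration of what a patch sheet does, the sketch below maps fixtures to start addresses and channel layouts, then slices one DMX universe (up to 512 channel values of 0-255) into named values per fixture. The fixture names, channel layouts and the `PatchEntry` / `parse_universe` helpers are hypothetical; this is plain Python showing the idea, not the Unreal DMX plugin's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PatchEntry:
    """One row of a patch sheet (all names here are hypothetical)."""
    fixture: str          # label for the fixture
    start_address: int    # 1-based DMX address of the first channel
    channels: tuple       # channel names, in the order the fixture expects


def parse_universe(universe: bytes, patch: list) -> dict:
    """Split one DMX universe into per-fixture channel values."""
    if len(universe) > 512:
        raise ValueError("a DMX universe carries at most 512 channels")
    result = {}
    for entry in patch:
        first = entry.start_address - 1      # DMX addresses start at 1
        last = first + len(entry.channels)
        if first < 0 or last > len(universe):
            raise ValueError(f"{entry.fixture} is patched outside the universe")
        values = universe[first:last]        # one byte (0-255) per channel
        result[entry.fixture] = dict(zip(entry.channels, values))
    return result


# Hypothetical patch sheet: two fixtures on one universe.
PATCH = [
    PatchEntry("wash_1", 1, ("dimmer", "red", "green", "blue")),
    PatchEntry("spot_1", 5, ("dimmer", "pan", "tilt")),
]

universe = bytearray(512)
universe[0:4] = bytes([255, 128, 0, 64])     # wash_1 on addresses 1-4
universe[4:7] = bytes([200, 10, 20])         # spot_1 on addresses 5-7

levels = parse_universe(bytes(universe), PATCH)
print(levels["wash_1"])
```

The point of the sketch is that the raw data is just a row of numbers. All the meaning lives in the patch, which is why the patch sheet has to match on both the sending and the receiving side.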

Another benefit of this protocol is the ability to use it in tandem with Unreal Engine's Take Recorder. This feature listens to all the data you choose, records it in realtime and caches it into a level sequence, which can be played back without a connected device or any worry about latency and dropped frames. While I'm mentioning it here under DMX, because the workflow is very nicely integrated, it is also possible to record almost anything in Unreal so that playback is always consistent.

3rd Party Solutions:

- Offworldlive – Offers Spout and NDI capabilities. This product is free for non-commercial use, but on higher-end projects you must factor in the cost. It is the quickest solution for texture sharing without knowing the intricacies of how things work natively. I find myself using this quite often. Once enabled, you have many Unreal actors assisting you in the process.

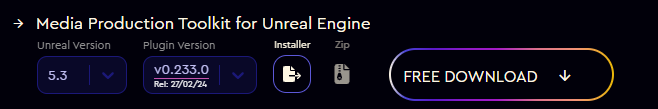

- OSC Plugin – A different approach outside of native support.

- Carbon for Unreal – DMX previz suite.

There are many ways to connect to Unreal. It really depends on your needs. It's always good to understand what you're trying to achieve and find the best fit for that application.

Realtime Workflows:

As all of you have experienced in Touch, resource management is the game we play. Since both Unreal and Touch normally lean heavily on a single main thread, you must find ways to maintain your FPS. Both applications want to have focus, because the application in focus gets the highest priority on your computer's resources.

This is why you should be clear about your needs when figuring out what works best for you.
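To put a number on "maintaining your FPS", it helps to think in terms of a frame budget: the time each frame is allowed to take at a given frame rate. The small sketch below is only arithmetic. The function names and the example frame times are made up for illustration, and real measurements would come from the performance tools in Touch and Unreal.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Time available per frame, in milliseconds, at the target frame rate."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return 1000.0 / target_fps


def fits_budget(frame_time_ms: float, target_fps: float) -> bool:
    """True if a measured frame time still holds the target frame rate."""
    return frame_time_ms <= frame_budget_ms(target_fps)


# Hypothetical measurements against a 60 FPS target.
print(round(frame_budget_ms(60), 2))   # budget per frame in milliseconds
print(fits_budget(12.0, 60))           # a 12 ms frame holds 60 FPS
print(fits_budget(20.0, 60))           # a 20 ms frame does not
```

If an application that held its budget on its own starts missing it once the other one is running on the same machine, that is a sign the two are competing for resources.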

I enjoy using TouchDesigner as a controller to manipulate virtual worlds. For my needs, I need both in focus. It works better if I'm splitting the workload between two synced machines, one running Touch and the other running Unreal.

This is the flow that Touch Engine tries to condense. If you know exactly what the Tox you've loaded into Unreal is doing, and how, this may be the path for you.

If you're using a lighting desk, which is normally hardware, it is possible to keep focus on Unreal while operating the physical hardware. The same goes for MIDI devices. So if you have stored parameter mappings for any device sending MIDI into Touch and do not need to see what is happening, Touch Engine again provides a solution.

In the end, especially in a setting where hands-on interaction and clear visibility of your environment matter, I would recommend operating such a system on two machines. That said, it is possible to test MVPs (minimum viable products) while working on one computer. In this course, for the sake of staying focused on the software, we will do our work on one.